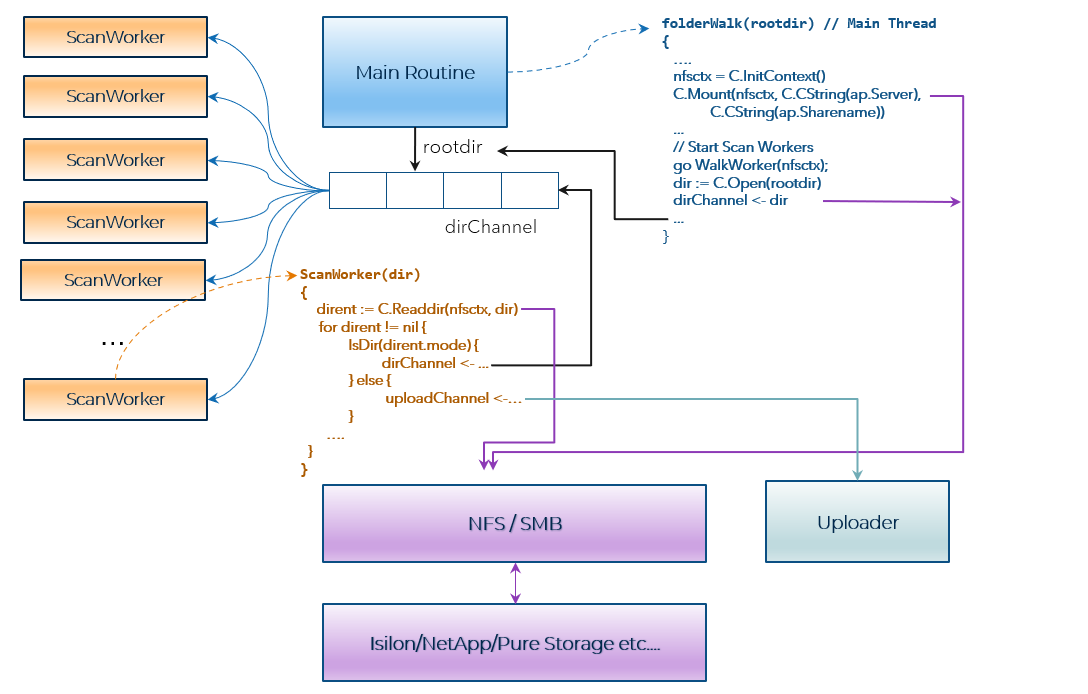

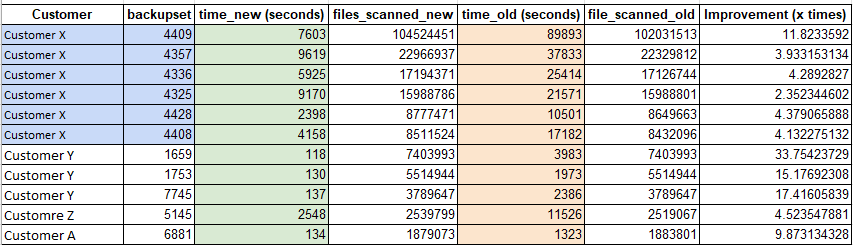

While scanning over NFS/SMB mounts, combined with the parallelism described above, served us well, scanning speed remained a concern for large enterprise customers with millions of files.

Smart Scan

In version 2, we added a capability called Smart Scan.

As noted in the previous section, scanning the entire file system over an NFS/SMB mount to detect changes during incremental backups can be very expensive, especially for large file systems with millions of files, and the scan itself takes a significant amount of time.

Some NAS and storage array vendors provide snapdiff capabilities (such as Isilon ChangeList) that identify which files have changed between snapshots. However, snapdiff is generally available only on high-end arrays, and these capabilities are often not exposed to all backup vendors. Without snapdiff, incremental backups rely on modification time to detect whether a folder or file has changed since the last full or incremental backup. The problem is that a directory's modification time changes only when a file is added to or deleted from it; modifying or appending to an existing file does not update it. If the file system scan during an incremental backup skips directories based on their modification time, it will miss existing files that have been modified or appended since the last backup. On the other hand, if the incremental backup reads the entries of every directory to identify changed files, backup performance suffers.

In large NAS environments, we observed that the change rate is typically 0.1% to 0.5% for many customers, so most directories stay the same between backups. But if we ignore the modification time on directories, we end up scanning these massive file systems in full.

With Smart Scan, we provided two parameters to customers:

Parameter 1: Only scan files created/modified in the last 3/6/12/24 months

Parameter 2: Full Scan (scans all files, including those that were not modified during the specified period and were therefore skipped by Smart Scans)

The Smart Scan algorithm relied on the age of each directory, calculated as follows:

Age(Dir) = TimeNow() - Max(ModificationTime(Dir), MaxModificationTime(Dir Children))

However, Smart Scan did not handle in-place modifications of, or appends to, existing files. Because a directory's timestamp does not change when a file inside it is modified in place, such changes are picked up only in the next full scan, which might be weeks away (every 4 weeks by default). This could be an issue for customers whose NAS filers hold database files, log files, and other files where data gets appended, because for these files the change rate from in-place modifications and appends is much higher than from new file additions and deletions.

Vendor API Integration - Isilon ChangeList

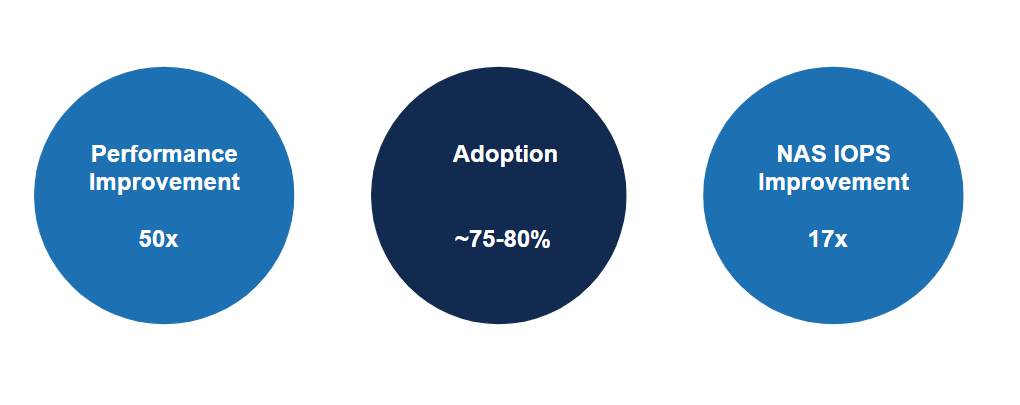

Some vendors, such as Isilon, have made their snapshot and ChangeList APIs available, enabling faster and more efficient file scanning. This brought two major benefits:

The ChangeList API provided by Isilon OneFS returns the changes made to the underlying file system since the previous snapshot, so only changed files need to be examined. The ChangeList API relies on the OneFS snapshot feature (SnapshotIQ) to take point-in-time snapshots of the underlying file system. This dramatically reduces scan time.

The API also enables us to capture consistent point-in-time copies of the file system. Because the data is backed up from the snapshot, it does not change while we traverse and back up the file system.

Incremental backups based on Isilon ChangeList use the previous snapshot, created by either the last full scan or the last incremental scan, as the base reference. If the incremental scan completes successfully, the previous snapshot is deleted, and the current snapshot is retained as the base for the next incremental scan.

Vendor API Integration - ChangeList Creation and Processing

The ChangeList API takes two snapshot IDs and returns all changes between the two snapshots. To get a ChangeList, a job must first be created using the jobs API, which returns a job ID. ChangeList jobs can take time, depending on how many changes they need to process. The status of a ChangeList job can be retrieved using the /snapshot/changelists API. We poll this API every 20 to 30 seconds: while the job is running, its status is “in progress,” and when the job ends, the status changes to “ready.”

Once the ChangeList job is complete, the actual ChangeList is retrieved using the following API:

```
GET https://1.2.3.4:8080/platform/8/snapshot/changelists/8_10/entries
```

```
{
  "atime" :
  ...
  "change_types" : [ "ENTRY_ADDED", "ENTRY_PATH_CHANGED" ],
  ...
  "path" : "/000_Rename",
  ...
  "user_flags" : [ "inherit", "writecache", "wcinherit" ]
},
```

The two fields shown above, change_types and path, are of primary importance: together they determine what kind of change occurred and which file it affected.
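Decoding an entry down to these two fields can be sketched with `encoding/json`. The `changeEntry` type and its helpers are hypothetical, and the sketch decodes a single entry object because the shape of the enclosing response is not shown in the excerpt above. Fields such as `atime` and `user_flags` are ignored by `json.Unmarshal` because the struct does not declare them.

```go
package main

import "encoding/json"

// changeEntry (hypothetical) keeps only the two fields that matter for
// classifying a change; other entry fields are ignored by json.Unmarshal.
type changeEntry struct {
	Path        string   `json:"path"`
	ChangeTypes []string `json:"change_types"`
}

// has reports whether the entry carries the given change type, e.g. "ENTRY_ADDED".
func (e changeEntry) has(changeType string) bool {
	for _, ct := range e.ChangeTypes {
		if ct == changeType {
			return true
		}
	}
	return false
}

// parseEntry decodes a single ChangeList entry object.
func parseEntry(raw []byte) (changeEntry, error) {
	var e changeEntry
	err := json.Unmarshal(raw, &e)
	return e, err
}
```

The full set of change types is defined by OneFS and is not reproduced here; the sketch only relies on the two values that appear in the sample entry.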

Vendor API Integration - Processing a Large ChangeList

One of the challenges we faced during this project was that the ChangeList API did not return entries in sorted order; they came back in random order, possibly because the Isilon ChangeList job is multithreaded internally.

For example, assume the following 100 files were added to the dir1/dir2 directory:

f_000.data

f_001.data

f_002.data

…

f_099.data

The ChangeList output comes in random order, for example:

/dir1/dir2/f_040.data

/dir1/dir2/f_008.data

/dir1/dir2/f_023.data

/dir1/dir2/f_001.data

/dir1/dir2/f_015.data

/dir1/dir2/f_068.data

/dir1/dir2/f_027.data

…

This made it difficult for us to process the entries in parallel. One solution is to sort the entries in memory, but when a ChangeList contains millions of entries, this puts heavy pressure on system memory. To avoid memory issues, we could have used an external merge sort. Instead of implementing one from scratch, however, we decided to use BoltDB, a simple embedded key/value store written in Go that keeps its keys in sorted byte order. The ChangeList output is inserted into BoltDB using batch inserts, with the path as the key and the ChangeList entry as the value. The ChangeList processing routine then reads the entries directly from BoltDB, in key order, and processes them. We implemented a wrapper around BoltDB to parallelize these reads.

Enhanced Smart Scan

Some NAS vendors, like Isilon, provide snapdiff capabilities that directly report the changes to the file system since the last backup. Apart from Isilon, however, there are many vendors where either the change list capability is missing or the primary storage vendor is unwilling to make it available to backup vendors. As described for version 1 of our implementation, scanning over NFS and SMB mounts is the only option when a vendor API is unavailable. But with traditional NFS and SMB mounts, we saw that the scan became a major bottleneck for backups, and the problem grows worse as the number of files increases.

Legacy backup vendors solved this problem with volume-level backups, which avoid the scan phase altogether. However, volume-level backups make file-level recovery difficult and often tie the solution to the NAS vendor's volume and file system format.

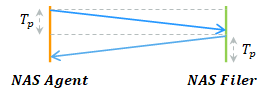

NFS and SMB clients are often implemented in the operating system kernel. File and directory attributes such as last modified time, last access time, and ACLs are read through the POSIX API (stat and related calls) on Unix and through the Win32 API on Windows. During a file system scan, the backup application reads the entries of each directory and issues a stat call for every entry to check whether it has been modified. This leads to performance bottlenecks for two main reasons: