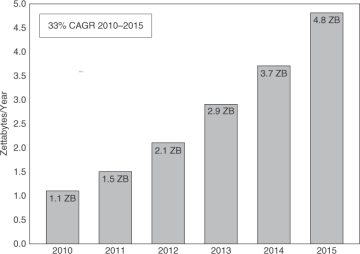

Figure 4-4. Data Center Traffic Quadruples from 2010 to 2015. Cloud Traffic Is Expected to Be Just over One Third of the Data Center Traffic in 2015.

(Source: Cisco Cloud Index)

NOTE: Cisco’s Global Cloud Index considers all provider and enterprise data centers, and includes the following traffic categories:

- Traffic that remains inside the data center

- Traffic between data centers

- Traffic from data center to end users over the Internet or IP WAN

Let’s try to put 1.6 zettabytes in perspective. This is the equivalent of 5 trillion hours of business web conferencing or 1.6 trillion hours of HD video streaming. Another interesting comparison is with the overall global Internet traffic, which in 2015 is expected to be just under 1 zettabyte, according to the Cisco Visual Networking Index (VNI).

In addition to the mind-boggling growth in traffic volumes, cloud applications, services, and infrastructure are transforming the pattern of data center traffic flows. Cloud-ready networks inside data centers, between data centers, and from data center to users will play an increasingly crucial role in scaling efficiently to handle this growth in cloud traffic, while maintaining profitability for cloud providers without compromising the end-user experience.

Monetization

Earlier in this chapter, we discussed the role of the network in speeding up adoption of cloud services, providing solutions to the fundamental concerns that businesses have about wholeheartedly embracing the cloud. Cloud providers can leverage their network assets to enable their customers to confidently start moving more and more of their critical workloads to the cloud. On top of this, what if cloud providers could also directly monetize their network assets? What if networks and network services could be offered by the provider as a service; that is, network-as-a-service (NaaS)?

Along with compute and storage, networks and network services can be offered as a service, to be consumed, metered, and billed, based on usage. The economics of this model provide network vendors and cloud providers with strong incentives to innovate on compelling network services that add significant value for their customers.
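To make the "consumed, metered, and billed" idea concrete, here is a minimal sketch of usage-based billing for network services. The metric names, the rates, and the tenants are all hypothetical and chosen only for illustration; a real provider would define its own pricing and metering pipeline.

```python
from dataclasses import dataclass

# Hypothetical rates in cents per unit; real pricing is set by the provider.
RATES_CENTS = {
    "bandwidth_gb": 5,         # per GB transferred
    "load_balancer_hours": 2,  # per hour a load balancer is provisioned
}


@dataclass
class UsageRecord:
    tenant: str
    metric: str
    quantity: int


def bill(records):
    """Sum metered usage into a per-tenant charge, in cents."""
    totals = {}
    for record in records:
        charge = record.quantity * RATES_CENTS[record.metric]
        totals[record.tenant] = totals.get(record.tenant, 0) + charge
    return totals


records = [
    UsageRecord("acme", "bandwidth_gb", 100),
    UsageRecord("acme", "load_balancer_hours", 50),
    UsageRecord("globex", "bandwidth_gb", 10),
]
invoice = bill(records)
```

Keeping amounts in integer cents avoids floating-point rounding in the totals.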

The following are methods to offer networks and services for consumption.

Service Catalog

The discussion on cloud service management in Chapter 3, “Cloud Taxonomy and Service Management,” explained how cloud services, defined in the service catalog, are offered to customers through self-service portals or via application programming interface (API) access. In addition to including various predefined cloud services, the service catalog enables the flexibility to add or modify optional features for those services. The same service catalog provides a means to define and offer networking for consumption (ranging from a basic VLAN service to a complex network service that provides security across multiple data centers).

To include network services in the service catalog, they need to be abstracted and presented in a simplified manner to the customer who may not be a networking expert. The intricacies and complex operations involved in enabling the network service must be hidden from the customer. Simplification is key, and ordering NaaS should be as easy as a few clicks on the cloud portal or a small number of intuitive API calls.

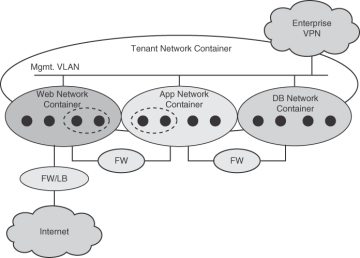

Here are a few examples of data center networking services, both basic and premium, that a provider could offer in their service catalog:

- Traffic isolation between tenants

- Access control between virtual machines (VMs) of three-tier apps

- Load balancing across tiers of the three-tier apps

- Virtual private network (VPN) termination to isolated segments

- Quality of service (QoS) inside the data center fabric
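One way to picture such a catalog is as a small data structure that exposes each service by name, with a handful of customer-tunable options and nothing else. The sketch below is an assumption for illustration only: the class names, option keys, and tier labels are hypothetical and do not come from any particular cloud platform.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    name: str
    tier: str                                    # "basic" or "premium"
    options: dict = field(default_factory=dict)  # customer-tunable defaults


class ServiceCatalog:
    def __init__(self):
        self._entries = {}

    def add(self, entry):
        self._entries[entry.name] = entry

    def order(self, name, **overrides):
        """Return an order for a service, rejecting options it does not expose."""
        entry = self._entries[name]
        unknown = set(overrides) - set(entry.options)
        if unknown:
            raise ValueError(f"unsupported options: {sorted(unknown)}")
        return {
            "service": name,
            "tier": entry.tier,
            "options": {**entry.options, **overrides},
        }


catalog = ServiceCatalog()
catalog.add(CatalogEntry("tenant-isolation", "basic"))
catalog.add(CatalogEntry("load-balancing", "premium", {"algorithm": "round-robin"}))

order = catalog.order("load-balancing", algorithm="least-connections")
```

The customer sees only the service name and its options; how the provider realizes the service on the underlying devices stays hidden behind the catalog.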

The service catalog does not need to be restricted to network services inside the data center. After all, the end user consumes the cloud service from across the WAN (Provider IP NGN) or Internet. Cases where the cloud provider owns or controls network assets in the IP NGN present an opportunity to abstract the network services available in the IP NGN and bring them up to the service catalog. Examples of such services include the following:

- Virtual Private LAN Service/Multiprotocol Label Switching (VPLS/MPLS) VPN for private access to cloud

- WebVPNs for public access to cloud

- App performance enhancement with WAN acceleration, web caching

- Security through firewall, deep packet inspection (DPI), and distributed threat detection services in the NGN

- Optimal cloud services placement based on network proximity and performance

Not only do these NGN services open up additional revenue streams for the cloud provider, they also enable the provider to offer end-to-end security and performance capabilities. Certain network services such as firewall, QoS, and WAN application acceleration could potentially be distributed across the NGN and data center networks.

Network Services à la Carte

One option for monetization is to offer network services à la carte. Here, network connectivity and services can be individually ordered by the consumer. The exact needs are conveyed as part of the API call or via a portal. For instance, if the developer simply needs to connect the database VM to an isolated virtual network segment that is not routable from the Internet but is reachable from the web servers, those network attributes would be specified as part of the API invocation, as shown in the following pseudo API example:

1. Create a DB network, specifying the following address range:

create_network(name="db-net", cidr="10.0.1.0/24")

2. Attach the DB VM to the network created in Step 1:

attach_vm(vm=vm_uuid, network="db-net")

3. Create a route to allow web servers to access the DB servers:

create_route("web-net", "db-net", "local")
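The pseudo API calls above can be exercised against a small in-memory stand-in, sketched here in Python. This is an illustrative model under stated assumptions, not a real provider API: the `VirtualNetworkAPI` class, the `can_reach` helper, the `web-net` address range, and the generated VM identifier are all hypothetical, and the `"local"` scope is simply recorded rather than enforced.

```python
import ipaddress
import uuid


class VirtualNetworkAPI:
    """In-memory stand-in for a provider's network API (hypothetical)."""

    def __init__(self):
        self.networks = {}   # network name -> {"cidr": ..., "vms": set()}
        self.routes = set()  # (source network, destination network, scope)

    def create_network(self, name, cidr):
        if name in self.networks:
            raise ValueError(f"network {name!r} already exists")
        # ip_network() rejects malformed ranges such as "10.0.1.5/24".
        self.networks[name] = {"cidr": ipaddress.ip_network(cidr), "vms": set()}

    def attach_vm(self, vm, network):
        self.networks[network]["vms"].add(vm)

    def create_route(self, source, destination, scope):
        for name in (source, destination):
            if name not in self.networks:
                raise KeyError(f"unknown network {name!r}")
        self.routes.add((source, destination, scope))

    def can_reach(self, source, destination):
        """True if a route from source to destination has been created."""
        return any(s == source and d == destination for s, d, _ in self.routes)


api = VirtualNetworkAPI()
api.create_network(name="web-net", cidr="10.0.0.0/24")  # assumed web tier range
api.create_network(name="db-net", cidr="10.0.1.0/24")
vm_uuid = str(uuid.uuid4())  # placeholder for the DB VM's identifier
api.attach_vm(vm=vm_uuid, network="db-net")
api.create_route("web-net", "db-net", "local")
```

Because no route exists from any Internet-facing network to `db-net`, the database segment stays isolated in this model except for traffic arriving from `web-net`.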

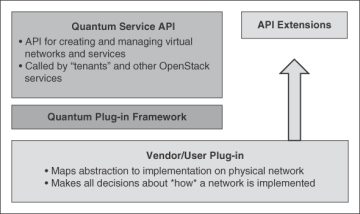

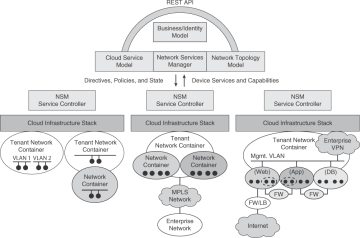

A well-designed API enables the users to easily describe what they want out of the network: for example, a network that supports a certain amount of bandwidth, a network with QoS, or perhaps a network with monitoring services. The APIs represent a contract to provide a certain service. While the underlying networking devices may differ, the functionality delivered by the API call is expected to be the same. In essence, a network hypervisor is needed. Analogous to the compute hypervisor, the network hypervisor would provide the ability to abstract the underlying networking hardware into services that can then be consumed by the user.
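The network hypervisor idea can be sketched as a single user-facing call backed by interchangeable device drivers. Everything in this example is an assumption made for illustration: the class names, the `isolate_segment` method, and the configuration strings are hypothetical and do not correspond to any vendor's product or command syntax.

```python
from abc import ABC, abstractmethod


class NetworkDriver(ABC):
    """Device-specific backend hidden behind the network hypervisor."""

    @abstractmethod
    def isolate_segment(self, tenant, segment_id):
        """Return the device-specific configuration that isolates a segment."""


class VlanDriver(NetworkDriver):
    def isolate_segment(self, tenant, segment_id):
        return f"vlan {segment_id} for {tenant}"


class OverlayDriver(NetworkDriver):
    def isolate_segment(self, tenant, segment_id):
        return f"overlay segment {segment_id} for {tenant}"


class NetworkHypervisor:
    """Presents one contract to users regardless of the hardware underneath."""

    def __init__(self, driver):
        self.driver = driver
        self.applied = []  # device-level detail, never shown to the user

    def create_isolated_network(self, tenant, segment_id):
        self.applied.append(self.driver.isolate_segment(tenant, segment_id))
        return {"tenant": tenant, "segment": segment_id, "isolated": True}


on_vlan = NetworkHypervisor(VlanDriver())
on_overlay = NetworkHypervisor(OverlayDriver())
result_a = on_vlan.create_isolated_network("acme", 100)
result_b = on_overlay.create_isolated_network("acme", 100)
```

Both calls return the same result to the user even though the configuration applied to the underlying devices differs, which is the contract the API is meant to represent.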

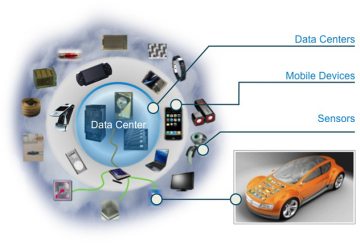

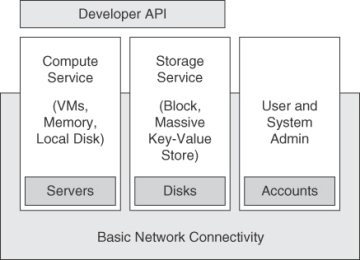

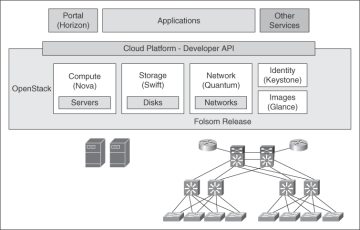

Not too long ago, though, developers did not have any visibility or control over the network, with infrastructure-as-a-service (IaaS) offerings focusing primarily on compute and storage, as illustrated in Figure 4-5. The network was there only to provide connectivity. Each VM would have a very flat view of the world, and there would not be any topology at all. Obviously, network services would not be available for consumption in such architectures.

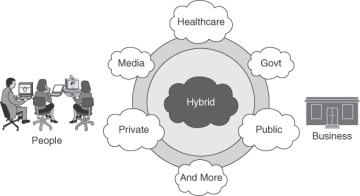

Cloud deployments have brought about game-changing benefits for both the providers and the consumers but continue to be challenged by certain inhibitors to adoption. Consider the case of an enterprise’s chief information officer (CIO) contemplating a move to the cloud. The cost and agility benefits offered by cloud deployments make it an attractive option for the organization. It allows the IT group to focus its limited resources on the core business of the company, enabling it to fund and undertake new projects with business impact.
