The traditional way of developing software is cumbersome: a developer writes, builds, and runs code in one environment, where it functions as intended. When shipped and run in another environment, however, the same code may fail with errors and defects. Containerization, the encapsulation of an application together with its required environment, addresses this problem by packaging the application in containers, such as Docker containers. This helps organizations modernize legacy applications and create new cloud-native applications that are both scalable and agile.

Container engines such as Docker provide a standardized way to package applications, including the code, runtime, and libraries, while orchestration platforms such as Kubernetes deploy and manage those containers at scale. Together, they enable applications to run consistently across the entire software development life cycle.

Containers continue to grow in popularity and demand because they address several development concerns at once, including the need for faster delivery, agility, portability, modernization, and life cycle management.

How do containers work?

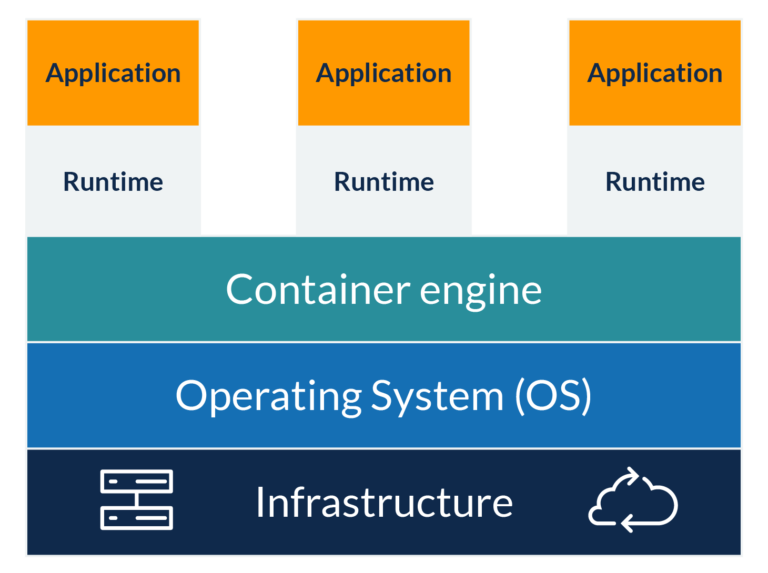

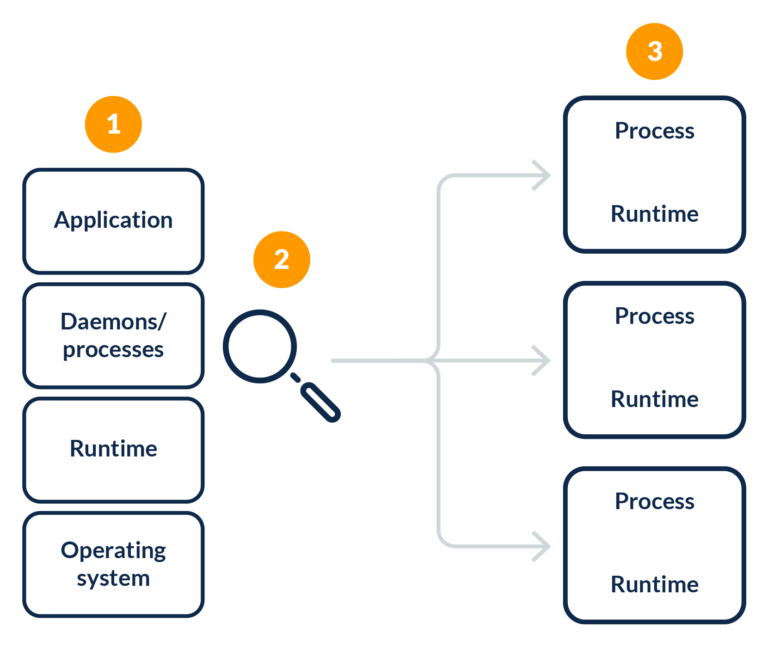

Containers abstract the application platform, its dependencies, and the underlying infrastructure. In a nutshell, a container bundles the runtime environment, application, libraries, binaries, and configuration files so that the application runs efficiently and consistently across different computing environments. Refer to the diagram below for a depiction of high-level container architecture.
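
To make the idea of a bundle more concrete, here is a minimal Python sketch that models the pieces a container packages and renders them as the text of a Dockerfile, the build recipe Docker uses. The `ContainerSpec` class, the `render_dockerfile` function, the file names, the base image tag, and the pinned library versions are all hypothetical and chosen for illustration only; a real project would likely write its Dockerfile by hand.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ContainerSpec:
    """Hypothetical description of what a container image bundles."""
    base_image: str                                         # runtime environment
    app_dir: str                                            # application code on the build host
    libraries: list[str] = field(default_factory=list)      # pinned dependencies
    config_files: list[str] = field(default_factory=list)   # configuration files
    command: list[str] = field(default_factory=list)        # how to start the application


def render_dockerfile(spec: ContainerSpec) -> str:
    """Render the spec as Dockerfile text using standard instructions."""
    lines = [f"FROM {spec.base_image}", "WORKDIR /app"]
    if spec.libraries:
        # Install libraries into the image so every host gets the same versions.
        lines.append("RUN pip install --no-cache-dir " + " ".join(spec.libraries))
    for cfg in spec.config_files:
        lines.append(f"COPY {cfg} /app/config/")
    lines.append(f"COPY {spec.app_dir} /app/")
    if spec.command:
        # Exec-form CMD is written as a JSON array.
        lines.append("CMD " + json.dumps(spec.command))
    return "\n".join(lines) + "\n"


# Assumed example values; none of these come from a real project.
spec = ContainerSpec(
    base_image="python:3.12-slim",
    app_dir="src/",
    libraries=["flask==3.0.0", "requests==2.31.0"],
    config_files=["settings.yaml"],
    command=["python", "main.py"],
)
dockerfile = render_dockerfile(spec)
print(dockerfile)
```

Because the runtime, libraries, configuration, and code are all declared in one recipe, an image built from it carries the same contents to every host that runs it, which is the consistency described above.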